I had decided to follow an Oxford University Summer School (2026) on the subject “Understanding Space and Time”.

One of the recommended readings was “Einstein’s Cosmos” by Michio Kaku, a book subtitled “How Albert Einstein’s Vision Transformed Our Understanding of Space and Time”.

I have decided to create a book review along the lines of personal revision notes. I’ve tried to hit the key points, and I’ve added numerous links to videos describing many of the scientific developments associated with Einstein and with the scientific developments of the time.

I always wonder why everyone uses an old photo of Einstein, when his most important work was done when he was much younger! Still it’s a nice colourful cover.

What did the reviewers write?

Reviews were generally positive about this book. Many reviewers called it a good, nontechnical introduction to Einstein’s work that’s accessible to general readers interested in science history. The author is often praised for making complex ideas understandable, and for using vivid explanations and analogies. One reviewer described it as a fresh, highly visual tour through Einstein’s legacy.

It has also been criticised for being very basic, too brief, and without technical depth. However, a few readers admitted they struggled with parts of it because they didn’t already have some physics background. Yet others felt that the book was too light for serious learners, e.g. oversimplifying or prioritising narrative over rigorous detail.

What did I think of the book?

Let’s judge the book by its cover, i.e. the blurb on the outside back cover of the paperback.

There is mention of “humble beginnings”, “unemployed dreamer”, “hopeless loser”, “unpromising early career”, and finally “20th-century cultural icon”. Cultural icon certainly, but for the rest I remained totally uninterested, and totally uninspired.

We also see “scientifically interesting”, “fascinating read”, “enlightening book”, “refreshing look”, etc. My thoughts are “interesting” certainly, “enlightening” occasionally, but “fascinating” no. Some people considered the book “a nontechnical introduction to Einstein’s work that’s accessible to general readers interested in science history”. I can’t think that this is true. Scientific topics are mentioned and described, but not well explained. I can’t see that it is accessible to the “general reader”, who (in the US) reads up to 5 books per year, mostly fiction, with some current affairs/politics. Although sticking Einstein on the cover will certainly be a crowd pleaser, I fear that most “readers interested in science history” won’t get past the first two chapters, and will be put off reading similar types of books.

I have not read other books about Einstein, so I can’t comment on “refreshing”, but if this book is truly refreshing, then I have no intention of reading the others.

There is a claim that the author is a “genial populariser”, and frankly on this point I was truly disappointed. I do think that criticising the author for being very basic, too brief, and without technical depth is unfair. In fact I would have liked him to use a little more of his populariser’s magic wand on some of the more difficult-to-grasp concepts. I was disappointed that the key role of experiment and experimenter was not well exploited. I find it amazing that there were no images, graphs, drawings, etc. to try to explain, and not just mention.

I don’t really know if this is a criticism, but I learned more from what was not in the book, than what was in it. I read around some topics, I worked on trying to understand ideas, events, etc. (and with little help from the book itself), and I viewed and added links in my revision notes to numerous videos that I found useful.

The Preface

In the preface it’s claimed that recent Nobel Prize winners have simply been finding the crumbs that Einstein left on his table. Notably:

- In 1993 Russell A. Hulse and Joseph H. Taylor Jr. won the prize “for the discovery of a new type of pulsar, a discovery that has opened up new possibilities for the study of gravitation”.

- In 2001 Eric A. Cornell, Wolfgang Ketterle, and Carl E. Wieman won the prize “for the achievement of Bose-Einstein condensation in dilute gases of alkali atoms, and for early fundamental studies of the properties of the condensates”.

- In 2020 Roger Penrose won part of the prize “for the discovery that black hole formation is a robust prediction of the general theory of relativity”.

Part I - RACING THE LIGHT BEAM

Physics Before Einstein

It starts with the claim that Newton and Einstein were able “to visualise in a simple picture the secrets of the universe”. This tells the reader, more or less, where this book is situated in the spectrum between genuine scientific understanding and simplified, almost illustrative storytelling.

The book mentions that in 1666 Newton would propose a new mechanics based upon forces. I thought his Philosophiæ Naturalis Principia Mathematica was published in 1687. The book also mentions that Newton invented the reflecting telescope, whereas I understood that the principles were known earlier, and that Newton was the first to build a functional reflecting telescope in 1668.

The book also writes that Newton used the laws of gravity to calculate the motion of Halley’s comet and the Moon. I thought that Edmond Halley was the first person to correctly calculate the periodic motion of the comet that now bears his name. However, Newton did provide the theoretical framework that made that possible, and Newton did apply his laws directly to the orbit of the Moon.

The book also mentions that the same Newton’s laws of gravity were used by NASA to guide the space probes past Uranus and Neptune. I guess this refers to Voyager 2, which it is true used the underlying equations of motion from Newton, but in addition also had to make corrections for “small non-gravitational forces” (e.g. solar radiation pressure, thruster impulses, thermal recoil force, gas leaks, solar winds, etc.), and relativistic corrections were included in signal timing and tracking. Without these additional corrections the probes would have been off-course after the 10-year flight.

Newton unified the observable phenomena of gravity on Earth with the observable laws of behaviour of celestial bodies in space based on an absolute reference frame of space and time, and his laws remained intact for nearly 200 years.

It was James Clerk Maxwell who brought together the understandings of the observable phenomena of magnetism, electricity and light (and more broadly, the spectrum of electromagnetic radiation). Maxwell’s equations describe electric and magnetic fields rather than forces between masses, and are conceptually distinct from Newton’s laws. However, they were formulated within a classical (Newtonian) view of space and time, and when applied to charged particles, their effects are incorporated into Newton’s second law to determine motion.

Classical electrodynamics includes fields defined by Maxwell’s equations. Those fields exert a force on charges, and the motion of charges follows Newton’s law.

One fundamental difference is that Newtonian gravity was assumed to act instantly over all space, whereas changes in magnetic and electric fields take time and move at a definite velocity. Fields, unlike forces, allow vibrations that travel at a definite speed. It’s worth mentioning that static fields (like the electric field of a stationary charge) are not “moving”, but any change in those fields (e.g. moving a charge) propagates outward at the speed of light.

An electron has an electric field extending through space, and when it moves (changes velocity or direction) its electric field will change and that change will spread outward as a disturbance at the speed of light, and will be felt elsewhere after a delay consistent with the speed of light, not instantly. Maxwell would claim that light itself was a wave of electric and magnetic fields.

The above video tries to explain what electromagnetism is. There are more detailed videos that immediately start looking at the equations that describe electromagnetic fields. For those people interested in those equations, check out Griffith Electrodynamics Lectures.

Many people thought physics was “complete”, until the arrival of relativity and quantum theory. Many also supported the idea of an absolutely stationary, weightless, invisible, incredibly strong, but undetectable “aether” which allowed waves to travel through “nothing”.

The Early Years

Chapter Two covers the early years of Einstein’s life, in which I have no interest. But it does introduce a question…

Imagine running alongside a light beam, what would it look like? If you travel at the same speed as someone in another car, then they would look perfectly stationary to you (they would look “at rest”). So Einstein thought that if you ran alongside a light beam, it also would appear “at rest”. So the light would not oscillate in time, but how can a wave be frozen? If frozen light is impossible, it must be impossible to catch up with light.

It would appear that Einstein looked at the world, not with equations, but with simple physical pictures (an ability I much appreciate).

Einstein would later realise that according to Maxwell, light beams always travel at the same velocity, no matter how fast the observer moves. So you could never catch up with a light beam.

Special Relativity and the "Miracle Year"

There is a mention of Ernst Mach, of Mach number fame, who believed that relative motion could be measured, but absolute motion (i.e. absolute space and absolute time) could not. He also questioned the existence of the aether, for which there was no experimental evidence. The experiment of Michelson and Morley found no difference in the speed of light in different directions, which undermined the idea that the “aether” was real.

Returning to someone chasing a light beam, an observer would see our “chaser” almost catching the light beam, but the “chaser” himself/herself would say that the light beam sped away, and they could never have caught it. So two people saw the same event in totally different ways. Was Newton’s concept of absolute space and time incorrect, or was it Maxwell’s constancy of the speed of light?

Einstein’s answer was that time could beat at different rates depending upon how fast you move. The faster you move, the more time slows down. This is the theory of relativity. This meant that for our “chaser” of light beams, time slowed down, but for the external observer it did not. The result was space contraction and time dilation, described by the Lorentz transformation, and there was no need to invent the “aether”. The scientific article (published in 1905) was called “On the Electrodynamics of Moving Bodies”. And Einstein would claim that his theory worked not only for light, but for the entire universe.

The laws of physics are the same in all inertial frames.

The speed of light is a constant in all inertial frames.

An inertial frame is just when you are moving at a constant speed and in a straight direction. It’s not an inertial frame if you turn, or speed up, or slow down.
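To put numbers on time dilation and space contraction, here is a minimal Python sketch (my own addition, not from the book). It assumes the standard Lorentz factor γ = 1/√(1 − v²/c²), and the function names are purely illustrative.

```python
import math

C = 299_792_458.0  # speed of light in m/s (exact by definition)

def lorentz_factor(v: float) -> float:
    """Return gamma = 1 / sqrt(1 - v^2/c^2) for a speed v in m/s."""
    if abs(v) >= C:
        raise ValueError("speed must be below the speed of light")
    return 1.0 / math.sqrt(1.0 - (v / C) ** 2)

def dilated_time(proper_time: float, v: float) -> float:
    """Time the external observer measures for a tick of the moving clock."""
    return lorentz_factor(v) * proper_time

def contracted_length(rest_length: float, v: float) -> float:
    """Length the external observer measures along the direction of motion."""
    return rest_length / lorentz_factor(v)

if __name__ == "__main__":
    # The effect is tiny at everyday speeds and only grows large close to c.
    for fraction in (0.001, 0.1, 0.5, 0.9, 0.99):
        gamma = lorentz_factor(fraction * C)
        print(f"v = {fraction}c  gamma = {gamma:.6f}")
```

The loop shows why nobody noticed this before Einstein: the factor barely differs from 1 until the speed is a sizeable fraction of the speed of light.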

Also in 1905 Einstein published another article. If space contraction and time dilation were true then everything that was measured in terms of space (distance) and time must also change, which means that matter and energy change, e.g. the mass of an object increases the faster it moves.

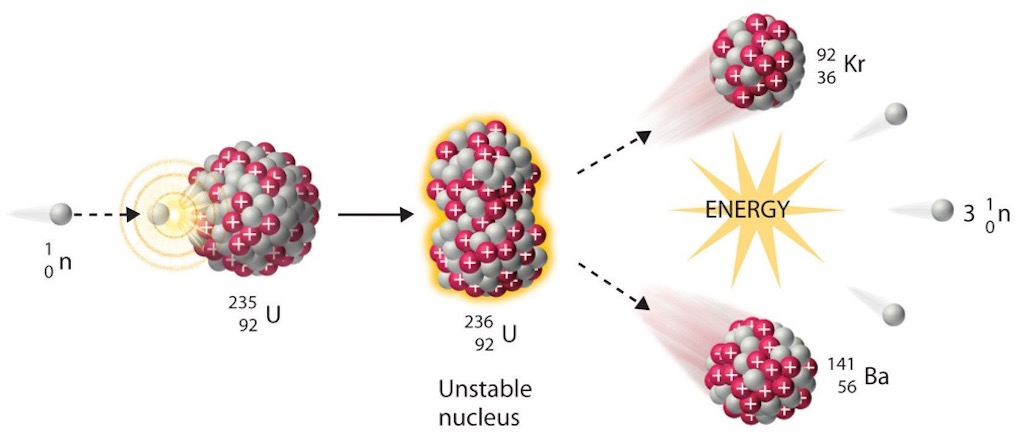

In this second article Einstein postulated that matter and energy were interchangeable, described by the famous E = mc². And this equation clearly implies that even a tiny amount of mass could release an enormous amount of energy. This explained why Marie Curie had discovered that a small amount of radium emitted heat indefinitely. In fact the radium was losing mass, but the loss was so small that it could not be measured at that time.
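A quick way to see how “tiny mass, enormous energy” works out is to run the arithmetic. The sketch below is my own illustration. The one-gram example and the TNT comparison (using the conventional 4.184 × 10⁹ J per ton) are assumptions chosen for scale, not figures from the book.

```python
C = 299_792_458.0          # speed of light in m/s
TNT_TON_JOULES = 4.184e9   # conventional energy of one ton of TNT

def rest_energy_joules(mass_kg: float) -> float:
    """Energy equivalent of a mass, E = m * c^2."""
    return mass_kg * C ** 2

if __name__ == "__main__":
    energy = rest_energy_joules(0.001)  # one gram
    print(f"1 g of mass   = {energy:.3e} J")
    print(f"TNT equivalent = {energy / TNT_TON_JOULES / 1000:.1f} kilotons")
```

The factor c² is about 9 × 10¹⁶, which is why the mass lost by radium as it gave off heat was far too small to weigh.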

Not content with two articles, Einstein published a third, on the photoelectric effect. Heinrich Hertz had noticed that a light beam striking metal could, under certain circumstances, produce a small electric current. Philipp Lenard had shown that the energy of the ejected electrons (producing that small electric current) was dependent upon the frequency of the light (i.e. colour), and not its intensity. Einstein explained this using the new “quantum theory” (which is now termed “old quantum theory”), and postulated that if energy occurred in discrete packets, then light must itself be quantised, in packets that would later be called “photons”, particles of light. This article earned Einstein the Nobel Prize in 1921.
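The dependence on colour rather than intensity can be sketched with Einstein’s relation, maximum electron energy = photon energy − work function. This is a minimal illustration of my own. The work function of 2.3 eV is an assumed, illustrative value for a hypothetical metal, and the function names are not from any source.

```python
PLANCK_H = 6.62607015e-34        # Planck constant in J*s (exact SI value)
LIGHT_SPEED = 299_792_458.0      # m/s
ELECTRON_VOLT = 1.602176634e-19  # joules per electronvolt

def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy of one photon, E = h*c/wavelength, in electronvolts."""
    wavelength_m = wavelength_nm * 1e-9
    return PLANCK_H * LIGHT_SPEED / wavelength_m / ELECTRON_VOLT

def max_electron_energy_ev(wavelength_nm: float, work_function_ev: float) -> float:
    """Maximum kinetic energy of an ejected electron (0.0 if none is ejected)."""
    surplus = photon_energy_ev(wavelength_nm) - work_function_ev
    return max(surplus, 0.0)

if __name__ == "__main__":
    work_function = 2.3  # eV, assumed value for a hypothetical metal
    for colour, nm in (("red", 700.0), ("green", 530.0), ("violet", 400.0)):
        energy = max_electron_energy_ev(nm, work_function)
        print(f"{colour:6s} {nm:5.0f} nm -> {energy:.2f} eV")
```

Note that intensity appears nowhere in the calculation. A brighter beam means more photons (and more electrons), but each electron’s energy is set by the colour alone.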

And, like buses, there was yet another article in 1905, this time on “Brownian Motion”, which pointed the way to the first experimental proof of the existence of atoms.

Einstein had published his articles in German in the Annalen der Physik, a leading physics journal (German and French were the two main languages of science at that time). But the reality was that Einstein was unknown, worked in a patent office, and the papers were technical and difficult, so the initial reaction was very limited. He would have to wait until 1919 (solar eclipse test of general relativity) to become world famous.

It was Hermann Minkowski who concluded that space and time formed a four-dimensional unity. Length, width and height locate any object in space, but time is needed to say when that object was located at that point.

The book does a poor job in moving from this description to simply stating that through symmetry, space and time were just different states of the same object. And then, literally in the same paragraph, it concludes that energy and matter, and electricity and magnetism are related via the fourth dimension, or unified through symmetry.

Over the next page in the book we read that Einstein’s equations “remain covariant when space and time are rotated as four-dimensional objects”. Then “The equations of physics must be Lorentz covariant” (i.e. maintain the same form under a Lorentz transformation). The book did note, and I still remember it well from my studies in the early 70s, that students find the eight partial differential equations of Maxwell “fiendishly difficult”. But the book did little to help me when reading it in 2026, even if it noted that Minkowski’s new mathematics collapsed Maxwell’s equations down to just two. And statements such as “the more beautiful an equation is, the more symmetry it possesses” remain equally abstract to me.

So time for some help…

The above video, entitled Spacetime rotations, understanding Lorentz transformations, explicitly shows symmetry as a rotation. It connects light speed invariance, and mixes space and time in the spacetime interval. It shows how a changing velocity rotates an observer’s axes in spacetime.

In the above video we see as the axes for space and time tilt, the coordinates of events change in both time and distance.

The video introduces a quantity that stays fixed. It’s the spacetime “distance” (interval). Even as axes rotate, the spacetime interval is preserved.

The key visual moment is where the transformation is shown as a rotation in spacetime. However, it’s not an ordinary rotation, but a hyperbolic one. This is the symmetry, different observers see different “angles”, but the “spacetime interval” is an invariant quantity, i.e. same for all.
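To convince myself that a boost really behaves like a (hyperbolic) rotation, here is a small sketch of my own. It assumes units where c = 1 and uses the standard “rapidity” (the hyperbolic angle, φ = atanh(v/c)), a term the video may or may not use. The function names are illustrative.

```python
import math

def rapidity(beta: float) -> float:
    """Hyperbolic 'angle' of a boost, where beta = v/c."""
    return math.atanh(beta)

def boost(t: float, x: float, phi: float) -> tuple[float, float]:
    """Hyperbolic rotation of an event (t, x) by rapidity phi, with c = 1."""
    return (t * math.cosh(phi) - x * math.sinh(phi),
            x * math.cosh(phi) - t * math.sinh(phi))

def combine_speeds(beta1: float, beta2: float) -> float:
    """Angles add, speeds do not: the combined speed is tanh(phi1 + phi2)."""
    return math.tanh(rapidity(beta1) + rapidity(beta2))

if __name__ == "__main__":
    # Two boosts of 0.5c do not give 1.0c, because it is the angles that add.
    print(combine_speeds(0.5, 0.5))
    # The same holds however many boosts are stacked: always below 1.
    print(combine_speeds(0.9, combine_speeds(0.9, 0.9)))
```

Just as two ordinary rotations combine by adding their angles, two boosts combine by adding their rapidities, and since tanh never reaches 1 the combined speed never reaches the speed of light.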

In modern physics, a symmetry is nothing more than a transformation that leaves some quantity unchanged. For example, rotate a sphere and it looks the same; do the same experiment at another time and the laws remain unchanged; change the inertial frame and the physics is unchanged. The “unchanged thing” is called an invariant.

So invariant is the thing (e.g. a result) that does not change under that transformation. Symmetry is the transformation (e.g. operation, action) under which something does not change.

So for us the transformation is the change in the inertial frame (Lorentz transformation). This changes space and time separately, but the spacetime interval does not change, it is invariant.
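As a numerical check of that statement, here is a minimal sketch of my own. It assumes one space dimension, units where c = 1, and the sign convention s² = t² − x² for the interval (other texts use the opposite sign). The names are illustrative.

```python
import math

def lorentz_boost(t: float, x: float, beta: float) -> tuple[float, float]:
    """Coordinates of the same event in a frame moving at beta = v/c (c = 1)."""
    gamma = 1.0 / math.sqrt(1.0 - beta ** 2)
    return gamma * (t - beta * x), gamma * (x - beta * t)

def interval_squared(t: float, x: float) -> float:
    """Spacetime interval from the origin, s^2 = t^2 - x^2 (c = 1)."""
    return t ** 2 - x ** 2

if __name__ == "__main__":
    event = (5.0, 3.0)  # an arbitrary event: t = 5, x = 3
    for beta in (0.0, 0.3, 0.6, 0.9):
        t_new, x_new = lorentz_boost(*event, beta)
        s2 = interval_squared(t_new, x_new)
        print(f"beta = {beta}: t = {t_new:.3f}, x = {x_new:.3f}, s^2 = {s2:.3f}")
```

Each observer gets a different t and a different x for the event, yet the last column stays the same for every value of beta.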

A transformation is a symmetry if it leaves a specified quantity invariant. This means that if a quantity remains invariant under a transformation, that transformation is a symmetry of that quantity.

But a quantity can be invariant under one transformation, but not under another different transformation.

So symmetry is the “allowed change”, and the invariant is “what survives that change”.

The entire point of the next video is that different observers can disagree on space and time separately, but they all agree on one combined quantity, the spacetime interval.

I personally did not find the above video particularly intuitive (perhaps because I can’t visualise matrices). It’s entitled The Lorentz Transformations – Intuitive Explanation, and may help the reader understand better.

I kind of enjoyed the fact that everyone says “everything is relative”, when in fact the video shows that not everything is relative, some things remain invariant.

I guess the key message is that reality is not built out of space and time separately (neither space nor time is invariant). Reality is built out of the combination, spacetime.

The reader may have identified that I am but trying to understand what is written, and not trying to explain it to others.

But this discussion has left me wondering …

Every accepted fundamental law of physics is invariant under certain symmetries. For example, Newton’s laws of motion are invariant under shifts in space and time (but not under relativistic transformations), Maxwell’s equations are invariant under Lorentz transformations, and special relativity is built around Lorentz symmetry.

Could it be true that something is a natural/universal law because there is a fundamental symmetry of an invariant quantity? Is symmetry more fundamental than laws themselves? Is symmetry the cause, and are the laws the effect?

However, what appears to be true is that all known fundamental laws respect Lorentz symmetry. That means that all currently accepted fundamental theories are Lorentz covariant, namely Maxwell’s equations, quantum electrodynamics, the Standard Model, and general relativity.

It’s also important to note that there are many very useful “approximate laws” that are very effective. For example Newton’s laws of motion are not Lorentz covariant, but at low speeds are perfectly useful.

The book goes on to mention that it was in 1909 that Einstein (now a professor at Zurich) presented the concept of “duality” in physics.

Above we have a short video entitled “Wave Particle Duality Explained”.

Below we have a more detailed presentation entitled “This is how the wave-particle duality of light was discovered”.

The book also mentions the Solvay Conference of 1911. And below we have a nice summary of this conference.

At the conference Paul Langevin gave a talk on “The Theory of Radiation and Quanta”, where he introduced the high-speed traveller scenario (what later became the twin paradox). It was not presented as a named paradox, but appeared as an illustrative argument supporting relativity. Langevin did not present “twins” in the modern storytelling sense. He simply argued that a traveller will have aged less than those who remained on Earth. It was presented as a real physical consequence, not as a puzzle. But it hit a nerve because it meant that absolute universal time did not exist, and that people would age differently. Langevin’s focus was on the fact that acceleration breaks symmetry, but the whole idea was seen as absurd by many participants.
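Langevin’s traveller can be put into numbers with one line of arithmetic. The sketch below is my own and makes a simplifying assumption: the traveller cruises at constant speed out and back, and the brief acceleration phases are ignored. The 10-year trip at 0.8c is an arbitrary example.

```python
import math

def traveller_years(earth_years: float, beta: float) -> float:
    """Years aged by the traveller while earth_years pass on Earth.

    Assumes constant cruising speed beta = v/c and ignores the
    (short) periods of acceleration and turnaround.
    """
    if not 0.0 <= beta < 1.0:
        raise ValueError("beta must be in the range [0, 1)")
    return earth_years * math.sqrt(1.0 - beta ** 2)

if __name__ == "__main__":
    earth = 10.0  # years that pass on Earth during the round trip
    for beta in (0.1, 0.5, 0.8, 0.99):
        print(f"v = {beta}c: traveller ages {traveller_years(earth, beta):.2f} years")
```

The difference in ageing is real and cumulative, which is exactly the point that upset many of Langevin’s contemporaries.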

Below we have a nice “story telling” presentation…

I enjoyed the little side mention that Einstein was nominated for the 1912 Nobel Prize, but certain Nobel laureates sabotaged his nomination. So it was Nils Gustaf Dalén who received the Prize (who?), for his work on improving lighthouses (what?).

That must have felt like a slap in the face, but it did not stop Einstein moving to Berlin in 1914 (his wife did not go with him, but he found someone else, so another example of the effect of spacetime).

Part II - WARPED SPACE-TIME

General Relativity and "the Happiest Thought of My Life"

Einstein’s theory of relativity was about inertial motion, but almost nothing is inertial.

An inertial frame is one in which Newton’s first law holds, i.e. a body with no net force moves in a straight line at constant speed. In Newtonian physics, inertial frames are defined relative to “absolute space” (which is physically unobservable). What comes closest are freely falling objects (local inertial frames) such as an astronaut in orbit or a falling lift (not perfect because there are weak gravitational fields everywhere). These can be seen as locally inertial because they follow geodesics (natural paths in spacetime).

A geodesic is the path that something follows when it is not being pushed or pulled sideways, but simply allowed to move freely within the space it is in. In flat space, this is just a straight line. But the important idea is that “straight” depends on the space itself. The rule is local, and at each small step, the path does not turn left or right within that space. That is what “straight” really means in geometry.

Now imagine a tiny ant walking on a surface. The ant cannot leave the surface, so it must stay within that world. If the surface is flat, like a table, the ant’s straight path is an ordinary straight line. But if the surface is curved, the ant’s straightest path may look curved to us from the outside. For the ant, however, it is still straight, because it never turns relative to the surface it lives on. That path is a geodesic.

Take a sphere, like the Earth. For an ant walking on the globe, the geodesics are great circles—paths like the equator or the routes airplanes take over long distances. These paths may look curved when drawn on a flat map, but on the sphere itself they are the straightest possible routes. If the ant keeps going without turning, it will naturally follow one of these great circles. This is a clear and clean example of geodesics on a curved surface.
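The length of such a great-circle route can be computed directly. This is a minimal sketch of my own using the standard haversine formula. It assumes a perfectly spherical Earth with a mean radius of 6371 km, and the city coordinates are approximate.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean radius, assuming a perfect sphere

def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float,
                    radius_km: float = EARTH_RADIUS_KM) -> float:
    """Length of the geodesic (great-circle arc) between two points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    # Haversine formula, clamped to guard against rounding just above 1.
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    return 2 * radius_km * math.asin(min(1.0, math.sqrt(a)))

if __name__ == "__main__":
    london = (51.5074, -0.1278)     # approximate coordinates
    new_york = (40.7128, -74.0060)  # approximate coordinates
    print(f"London to New York: {great_circle_km(*london, *new_york):.0f} km")
```

This is the ant’s “straight ahead” distance. Any other route drawn on the globe between the same two points would be longer.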

Now consider an open umbrella. The umbrella is also a surface, so mathematically it is a manifold, or a space the ant can walk on. But the umbrella itself is not a geodesic, but more like a stage on which geodesics can exist. If the ant walks forward without turning, it will follow a geodesic on the umbrella just as it would on the sphere. The difference is not in how the ant walks, but in the shape of the surface. On the umbrella, the resulting path may twist or curve in ways that are harder for us to predict, and features like the ribs are not guaranteed to be geodesics. If the ant changes direction, even slightly, then it leaves the geodesic.

So a sphere and an umbrella are both manifolds, i.e. spaces the ant can live on. A geodesic is a particular path on such a space, one that proceeds straight without turning within it. The object (sphere or umbrella) is not the geodesic. The geodesic is the special path traced on that object when nothing forces a deviation.

In reality everything appears to be constantly accelerating. Think wind moving leaves, the Earth orbiting the Sun, etc. Remember, in physics “acceleration” always includes deceleration. And this says nothing about gravity, which was assumed to be instantaneous. So Einstein’s theory became the “special theory”, as he looked for a “general theory” that would include gravity.

His key idea was the realisation that as a person falls freely, they will not feel their own weight (an idea Einstein already had as a patent officer in 1907). This meant that gravity would be cancelled out (not disappear) by their acceleration, and the person would appear weightless. This was not entirely new, since Galileo Galilei had shown that all objects accelerate at precisely the same rate under gravity (the “equivalence principle”). Newton knew that the planets and their moons were in a state of free fall in their orbits. This meant that the inertial mass is the same as the gravitational mass.

So the laws of physics in an accelerating frame or a gravitating frame are indistinguishable.

Newton had already questioned the idea that gravity could/should bend starlight, but he could not detect (measure) it. Einstein also decided that gravity must bend light.

Below we have a video presentation of…

So light beams are pulled by gravity, but this did not tell the experimenters about what gravity was.

What was lacking was a field theory of gravity, a kind of gravitational field whose lines of force could support gravitational vibrations that travelled at the speed of light.

“Ehrenfest’s Paradox” is well described in the book. Consider a simple merry-go-round (or spinning disk). At rest we know that the circumference is π times the diameter. However, once set in motion the outer rim travels faster than the interior. And according to relativity it should shrink more than the interior, distorting the merry-go-round. So the circumference shrinks and will be less than π times the diameter. For this to happen the “surface” of the merry-go-round (or disk) can no longer be flat. Space is curved. Test the idea out at the North Pole. Draw a large circle centred on the pole, measure its diameter along the ground, and you will discover that the circumference is less than π times the diameter, simply because the Earth’s surface is curved.
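The North Pole test can be checked with a little geometry. This is a sketch of my own, assuming a perfectly spherical Earth of radius 6371 km. For a circle whose radius r is measured along the curved surface, the circumference is 2πR·sin(r/R), a standard result of spherical geometry rather than something taken from the book.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assuming a perfect sphere

def circumference_on_sphere(surface_radius_km: float,
                            sphere_radius_km: float = EARTH_RADIUS_KM) -> float:
    """Circumference of a circle whose radius is measured along the surface."""
    return 2 * math.pi * sphere_radius_km * math.sin(surface_radius_km / sphere_radius_km)

def circumference_over_diameter(surface_radius_km: float,
                                sphere_radius_km: float = EARTH_RADIUS_KM) -> float:
    """The ratio that would equal pi on a flat surface."""
    return (circumference_on_sphere(surface_radius_km, sphere_radius_km)
            / (2 * surface_radius_km))

if __name__ == "__main__":
    for r in (1.0, 100.0, 1000.0, 5000.0):
        ratio = circumference_over_diameter(r)
        print(f"circle of radius {r:6.0f} km around the pole: C/d = {ratio:.5f}")
    print(f"flat-space value: {math.pi:.5f}")
```

For a small circle the ratio is indistinguishable from π, which is why a surveyor never notices. For a circle reaching from the pole to the equator the ratio drops to exactly 2.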

A Newtonian would say that a force pulls on something (e.g. Earth) passing near a very large object (e.g. Sun), whilst a relativist would say that space near the very large object is distorted and that affects the movement of that “something”. So gravity can be imagined as pulling on Earth, or space can be seen as pushing the Earth into a circular motion (orbit).

This did not explain what this pull (or push) is, and how it acts instantaneously throughout the universe. Gravity is caused by the bending of space and time, and the “force” of gravity is just a by-product of geometry.

We are not pulled to the Earth’s surface by a force, but pushed to the Earth’s surface by the fact that Earth’s matter (mass) warps space-time around our bodies.

Einstein was helped by Marcel Grossmann (misspelt in the book), to rediscover the special geometry of Bernhard Riemann.

Above is a video about this Riemannian Geometry, which I found fairly incomprehensible (my limitation, not the video’s).

But I think Einstein’s “curved space” just means that distances can vary from place to place in a consistent way. My understanding is that in flat space (Euclid) distances between any two points are fixed. Whereas in a smooth curved space, distances depend on where you are, and where you are going. You can imagine a metric that, if constant, means that you are on a flat surface, and if it changes as you change position (even a very tiny movement) then the surface is curved.
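A concrete case of a metric that changes with position is the grid of latitude and longitude. This is a sketch of my own, assuming a spherical Earth of radius 6371 km. On the sphere the distance for a small step is ds² = R²(dlat² + cos²(lat)·dlon²), so the “cost” of one degree of longitude depends on where you stand.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assuming a perfect sphere

def east_west_km(lat_deg: float, dlon_deg: float,
                 radius_km: float = EARTH_RADIUS_KM) -> float:
    """Distance covered by a small step in longitude at a given latitude."""
    return radius_km * math.cos(math.radians(lat_deg)) * math.radians(dlon_deg)

def north_south_km(dlat_deg: float, radius_km: float = EARTH_RADIUS_KM) -> float:
    """Distance covered by a small step in latitude (the same everywhere)."""
    return radius_km * math.radians(dlat_deg)

if __name__ == "__main__":
    for lat in (0.0, 30.0, 60.0, 85.0):
        print(f"latitude {lat:4.1f}: 1 degree of longitude = {east_west_km(lat, 1.0):7.2f} km")
    print(f"1 degree of latitude anywhere   = {north_south_km(1.0):7.2f} km")
```

On a flat sheet of graph paper one square is the same size everywhere. Here the same one-degree step shrinks as you move towards the pole. A caveat: coordinates alone can mislead (polar coordinates on a flat plane also have a changing metric), so a changing metric hints at curvature, and the curvature itself is the part that no change of coordinates can remove.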

Euclidean geometry defines plane geometry and solid geometry of three dimensions. Today Euclidean space is seen as a good approximation only over short distances or weak gravity.

The geometry is just the rule for measuring a distance that changes from point to point. It is space itself that will define the different straight lines (that actually bend) to go from one place to another, and objects will not feel a force, but will simply move along the “straightest possible path”. Such a path is called a geodesic, i.e. the curve representing in some sense the locally shortest path (arc) between two points on a surface (more generally, on a Riemannian manifold).

I’m not sure if this is a good description, but the line might appear to bend when viewed from a distance, but if you were actually walking along that line, it would appear to you to be straight (and be the shortest distance between departure and destination). You are not forced to take a “bent line”, it’s just that space itself has defined the straight lines, and you take the shortest.

The only thing worth remembering is that Einstein replaced forces with geometry by allowing the definition of distance (the metric) to vary across space and time.

I think differential geometry is a more general mathematical discipline that studies the geometry of smooth shapes and smooth spaces, otherwise known as smooth manifolds. The field has its origins in the study of spherical geometry, and more recently describes the geodesy of the Earth. The simplest examples of smooth spaces are the plane and space curves and surfaces in the three-dimensional Euclidean space. Most prominently the language of differential geometry was used by Albert Einstein in his theory of general relativity, and subsequently by physicists in the development of quantum field theory and the Standard Model of particle physics.

The book tells us that Einstein wanted to generalise the notion of covariance. Special relativity was based upon the Lorentz covariance, i.e. the equations of physics retained the same form under a Lorentz transformation. Now the challenge was to generalise this to all possible accelerations and transformations, not just inertial ones.

Remember the idea was that the laws of physics stayed the same for all observers that are moving with respect to one another within an inertial frame (they remain at rest or in uniform motion relative to the frame until acted upon by external forces). The key property of equations describing these laws is that if they hold in one inertial frame, then they hold in any inertial frame. This condition is a requirement according to the principle of relativity, i.e. all non-gravitational laws must make the same predictions for identical experiments taking place at the same spacetime event in two different inertial frames of reference.

So the idea was that Einstein wanted the equations to retain the same form no matter what frame of reference was used, whether it was accelerating or moving with constant velocity. Whilst each frame of reference requires a coordinate system to measure the three dimensions of space and the time, what Einstein wanted was a theory that retained its form no matter which distance and time coordinates were used to measure the frame. This led to the principle of general covariance: the equations of physics must maintain the same form under any arbitrary change of coordinates.

The book describes this as a table top covered in a regular mesh representing an arbitrary coordinate system used to calculate its area. No matter how the mesh is twisted, curled or distorted, the calculation of the area of the table top must remain the same.
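The table-top picture is easy to test numerically. In this sketch of my own, a hypothetical 2 m × 1 m table is covered by a mesh that can be bulged by a `twist` parameter (an invented distortion that leaves the table edges in place), and the area is found by adding up the mesh cells with the shoelace formula.

```python
import math

TABLE_LENGTH = 2.0  # metres, hypothetical table
TABLE_WIDTH = 1.0   # metres

def mesh_point(u: float, v: float, twist: float) -> tuple[float, float]:
    """Map mesh coordinates (u, v) in [0, 1] to a point on the table.

    The bulge vanishes on the mesh boundary, so the edges stay on the table edges.
    """
    bulge = twist * math.sin(math.pi * u) * math.sin(math.pi * v)
    return TABLE_LENGTH * (u + bulge), TABLE_WIDTH * (v + 0.5 * bulge)

def quad_area(corners: list[tuple[float, float]]) -> float:
    """Signed area of a polygon from its corners (shoelace formula)."""
    total = 0.0
    for (x1, y1), (x2, y2) in zip(corners, corners[1:] + corners[:1]):
        total += x1 * y2 - x2 * y1
    return 0.5 * total

def table_area(cells_per_side: int, twist: float) -> float:
    """Total area obtained by summing every cell of the (distorted) mesh."""
    n = cells_per_side
    area = 0.0
    for i in range(n):
        for j in range(n):
            corners = [mesh_point(i / n, j / n, twist),
                       mesh_point((i + 1) / n, j / n, twist),
                       mesh_point((i + 1) / n, (j + 1) / n, twist),
                       mesh_point(i / n, (j + 1) / n, twist)]
            area += quad_area(corners)
    return area

if __name__ == "__main__":
    for twist in (0.0, 0.05, 0.15):
        print(f"twist = {twist}: area = {table_area(20, twist):.6f} m^2")
```

The individual cells change size and shape as the mesh is distorted, but the total does not. The coordinates are bookkeeping, and the area is the physical fact.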

In hacking through the jargon we come across a “manifold”, which is a topological space that locally resembles Euclidean space near each point. But we must remember that a topological space is, roughly speaking, a geometrical space in which closeness is defined but cannot necessarily be measured by a numeric distance. One-dimensional manifolds include lines and circles, and two-dimensional manifolds are also called surfaces, like a plane and the surface of a sphere.

As usual, people love to create difficult expressions, so what exactly is a topological space? Think of the London Underground, where stations are connected by lines, so you can move from one station to another. Some stations are “close” because they are directly connected, or only a few stops apart. But the map is distorted, there are no meaningful physical distances, stations far apart geographically may look close, and straight lines mean nothing physically. But two stations are “close” if they are connected or easily reachable. So closeness in a topological space is defined by structure (connections), not by numbers (distances).

Another word often used is “tensor”. Firstly, a vector is a generalisation of a single number that represents a quantity with direction. It cannot be described by a single scalar such as “10 cm”. Vectors have both a magnitude and a direction, such as displacements, forces and velocities. And a vector field is an assignment of a vector to each point in a space, most commonly Euclidean space.

And just to make matters slightly more confusing, there is also a vector space, which is not the same as a vector field. A vector space is a set of vectors together with rules for adding and scaling them. Let’s take a beam under stress. At a specific point inside the beam there are a multitude of possible vectors (e.g. forces, velocities, directions, etc.). This set of all possible vectors forms a vector space. What is actually happening (e.g. the displacement or internal forces at each point) forms a vector field.

Now a tensor is an algebraic object that describes a multilinear relationship between vectors. For example, a tensor can describe how stress acts in different directions within the beam under load. A vector (depending on one direction) is a special case of a tensor (which can involve multiple directions). If we consider the stresses acting on a small volume (a cube) inside the beam, we obtain a tensor. A tensor field then assigns such a tensor to every point in a region of space (typically a Euclidean space or manifold, or physical space itself). In a real beam, stresses vary from point to point (e.g. near supports, near loads, and across surfaces under tension or compression) so the beam is described by a stress tensor field, not a single tensor at one location.
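To make the “multilinear relationship between vectors” a little more concrete, here is a minimal sketch. It assumes a made-up stress tensor at one point in the beam (the numbers are purely illustrative and do not come from the book). Give the tensor a direction, namely the unit normal of an imaginary cut through the beam, and it returns the force-per-area vector acting on that cut.

```python
# Hypothetical stress tensor at one point in a beam (units: MPa).
# Rows and columns correspond to the x, y, z directions; the values are made up.
stress = [
    [50.0, 10.0, 0.0],
    [10.0, 20.0, 0.0],
    [0.0,  0.0,  5.0],
]


def traction(sigma, normal):
    """Return the force-per-area vector on a surface with the given unit normal."""
    return [sum(sigma[i][j] * normal[j] for j in range(3)) for i in range(3)]


# A cut facing the x direction feels a pull along x and a shear along y.
print(traction(stress, [1.0, 0.0, 0.0]))  # [50.0, 10.0, 0.0]

# A cut facing the z direction feels only a pull along z.
print(traction(stress, [0.0, 0.0, 1.0]))  # [0.0, 0.0, 5.0]
```

The same nine numbers give a different answer for each direction you ask about, which is why a single vector is not enough to describe stress at a point.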

Ricci Curvature

Ricci curvature was not a specific chapter or topic in the book. But for me it was important to try to understand what it meant, because it “severely restricted the possible building blocks used to construct a theory of gravity”.

I’m sure that for those with some mastery of the mathematics, Ricci curvature is almost self-explanatory, but for me I look for a visual explanation (if possible with no equations at all).

So let’s release a small cloud of minute particles in space, and let each particle just fall freely (i.e. no forces at all, only spacetime geometry acting), and watch what happens to the shape of the cloud.

Will the particles follow geodesics, and will the distances between them remain constant so the cloud keeps its shape? This is what we might predict if spacetime was flat, meaning that nearby particles experience identical motion with no relative acceleration (e.g. no differences in gravitational acceleration from point to point).

If the dust particles move toward each other, then the cloud shrinks, and it appears that space is “pulling things together”. This is called positive Ricci curvature.

If the dust particles move apart, then the cloud gets bigger, and it appears that space is “pushing things apart”. This is called negative Ricci curvature.

So Ricci curvature tells whether the volume of a tiny ball of freely falling particles shrinks, grows, or stays the same. This reflects whether nearby particles are being pulled together or pushed apart, not by a force, but by differences in motion from point to point (tidal effects).

What Einstein was looking for was a geometric quantity that describes how matter (those minute particles) bends spacetime. But with strict rules. Firstly, it must work in any coordinate system. Secondly, it must be local (no long-range assumptions). Thirdly, it must respect conservation of energy. Remember the idea is that gravity is not a force (the original Newtonian idea), but the curvature of spacetime. Thus flat spacetime means no gravity (locally), and curved spacetime means tidal gravitational effects are present.

Before we move on it’s important to understand that matter-energy creates curvature of spacetime, and that curvature determines motion. So it’s differences in spacetime (i.e. curvature) that determine how nearby particles move relative to each other. Remember, it is not spacetime itself, but its curvature, that produces observable gravitational effects.

Einstein found two allowed tensors. First is a specific combination of Ricci curvature (the Einstein tensor) that describes how volumes change, and thus how matter focuses or spreads. This is the main gravity term, but remember it describes the geometry not the cause.

The second is the metric, which defines how spacetime measures distance and time between nearby points. A term proportional to the metric (the cosmological constant) represents a uniform property of spacetime itself.

Ricci curvature does not describe all aspects of spacetime curvature. It captures only the part that changes volume. Other aspects of curvature, which distort shapes without changing volume, are described by additional components. Ricci curvature is singled out because it is the part that naturally links to matter and satisfies the required conservation laws.

The book goes on to describe three key experiments that were to prove his ideas about space and gravity. The first was shown earlier (The Eclipse of 1919). The second is about red shift (see below).

And the third is the perihelion of Mercury (see below).

The Big Bang and Black Holes

If gravity always attracts then why had the static collection of stars in the universe not already collapsed on themselves? Because the universe was infinite and was full of a uniform collection of stars. Problem solved.

But if the universe was infinite and filled with stars, why was the night sky black?

Later Einstein would find that the universe described by his equations could not remain static, it would either expand or contract. So he introduced the cosmological constant, which would exactly counteract the attractive force of gravity, making the universe static. To get this to work, the constant had to be proportional to the volume of the universe, but that meant there was energy in empty space. It’s now called “dark energy”, the energy of pure vacuum.

It was Alexander Friedmann and Georges Lemaître who showed that an expanding universe emerged naturally from Einstein’s equations.

It was Edwin Hubble who first demonstrated that other galaxies existed, and then that their red shift showed that the universe was expanding. This is Hubble’s Law, the observation in physical cosmology that galaxies are moving away from Earth at speeds proportional to their distance.

Einstein described gravity not as a force but as the curvature of spacetime caused by mass–energy. More or less immediately Karl Schwarzschild found an exact solution showing that if enough mass is compressed into a sufficiently small radius, spacetime curvature becomes so extreme that an event horizon forms, i.e. a boundary beyond which nothing, not even light, can escape. The Schwarzschild radius is the size of the event horizon of a non-rotating black hole (a name given by John Wheeler).
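As a rough sketch of the scale involved, the snippet below uses the standard textbook formula for the Schwarzschild radius, r_s = 2GM/c². The formula and the rounded constants are my additions and are not quoted from the book.

```python
G = 6.674e-11          # gravitational constant, m^3 kg^-1 s^-2 (rounded)
C = 299_792_458.0      # speed of light, m/s
M_SUN = 1.989e30       # mass of the Sun, kg (rounded)


def schwarzschild_radius(mass_kg):
    """Radius of the event horizon of a non-rotating black hole, in metres."""
    return 2.0 * G * mass_kg / C**2


# The Sun would need to be squeezed to a radius of roughly 3 km.
print(schwarzschild_radius(M_SUN) / 1000.0, "km")

# Ten solar masses gives a horizon roughly 30 km in radius.
print(schwarzschild_radius(10 * M_SUN) / 1000.0, "km")
```

The radius grows in direct proportion to the mass, so doubling the mass doubles the size of the horizon.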

My understanding is that as a massive star collapses, there is no mechanism in classical general relativity to halt the collapse once a critical density is exceeded. The equations predict continued contraction, increasing curvature, and eventual formation of a region causally disconnected from the outside universe.

In 1934 Walter Baade and Fritz Zwicky predicted the existence of neutron stars, as remnants of supernovae. But for ~30 years, there was no observational evidence.

In 1967, Jocelyn Bell Burnell detected extremely regular radio pulses. The pulses had a period of ≈ 1.337 s, and a millisecond-scale pulse width. This object was named a pulsar (pulsating radio source).

A signal that varied on millisecond timescales implied a source that was only a few hundred km in diameter. And the regularity implied a rigid rotating object. The strong radio emission suggested intense magnetic fields.
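The size estimate comes from a simple argument: a source cannot change its brightness as a whole faster than light can cross it. A minimal sketch of that arithmetic, assuming a variation time of one millisecond for illustration:

```python
C_KM_PER_S = 299_792.458   # speed of light in km/s


def max_source_size_km(variation_time_s):
    """Upper limit on source size: the distance light travels in the variation time."""
    return C_KM_PER_S * variation_time_s


# A one-millisecond variation limits the source to about 300 km across.
print(max_source_size_km(1e-3))
```

This is only an upper limit, and a neutron star is in fact much smaller, but it already rules out ordinary stars and even white dwarfs, which are thousands of km across.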

The most obvious candidate was a rotating, highly magnetised neutron star. So a pulsar is a type (observable manifestation) of neutron star. In fact all pulsars are neutron stars, but not all neutron stars are pulsars.

So the discovery of pulsars established neutron stars as real physical objects. They are extremely compact remnants whose stability is maintained by neutron degeneracy pressure and strong nuclear interactions. Observationally, their masses were found to lie within a narrow range, and theory shows that there is a strict upper limit of order two to three times the mass of the Sun. Beyond this limit no known form of pressure can support the star against its own gravity. The pulsar discovery therefore did more than reveal a new class of object. It fixed, both observationally and theoretically, the point at which matter can no longer remain stable in a compact form.

This shifts the problem. Instead of looking for periodic signals, one looks for systems where a compact object is present but unseen, and where its mass can be inferred through its gravitational influence on a visible companion. In binary star systems emitting strong X-rays, the motion of the visible star can be measured with high precision. From this motion, one can determine the minimum mass of the unseen companion. In several cases, most notably Cygnus X-1, this mass (~14–21 solar masses) is found to exceed the maximum allowed for a neutron star.

At that point, the interpretation is no longer a matter of classification but of exclusion. If the object is too massive to be supported by any known pressure, and yet is confined to a region comparable in scale to a neutron star, then gravitational collapse cannot have been halted. General relativity predicted that such a collapse leads to the formation of an event horizon, a region from which no signals can escape. The unseen companion is therefore identified as a black hole.

The identification of black holes as real astrophysical objects completed the picture of gravitational collapse: matter could form stable compact remnants up to the neutron star limit, and beyond that threshold collapse would proceed to a black hole. However, general relativity made a further, more subtle prediction. If massive objects accelerate, particularly in asymmetric configurations such as binary systems, they should produce propagating disturbances in spacetime itself. These are called gravitational waves. For decades, this remained a purely theoretical consequence, with no direct means of detection.

Indirect evidence emerged in the 1970s with the discovery of a binary pulsar by Russell Hulse and Joseph Taylor. Precise timing of the pulsar revealed that the orbit of the system was gradually shrinking, at exactly the rate predicted if energy were being carried away by gravitational radiation (a form of radiant energy similar to electromagnetic radiation). This provided strong confirmation that gravitational waves were physically real, but it remained an inference based on orbital dynamics rather than a direct observation.

The situation changed with the development of laser interferometry, culminating in the construction of the LIGO detectors. These instruments were designed to measure minute changes in distance (in fact far smaller than atomic scales) caused by passing gravitational waves. In 2015, LIGO recorded a transient signal produced by the merger of two black holes. The waveform matched in detail the predictions of general relativity for such an event, namely an inspiral phase, a rapid coalescence, and a final ringdown as the newly formed black hole settled into equilibrium.
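To get a feel for “far smaller than atomic scales”, here is an order-of-magnitude sketch. It assumes a peak strain of about 1e-21 (the strain is the fractional change in length), LIGO’s 4 km arm length, and a proton radius of about 0.84 femtometres. These figures are my assumptions for illustration and are not taken from the book.

```python
ARM_LENGTH_M = 4_000.0        # length of one LIGO arm, metres
STRAIN = 1e-21                # assumed peak strain (order of magnitude only)
PROTON_RADIUS_M = 0.84e-15    # approximate proton radius, metres


def arm_length_change(strain, arm_length_m):
    """Order-of-magnitude change in arm length for a given strain."""
    return strain * arm_length_m


delta = arm_length_change(STRAIN, ARM_LENGTH_M)
print(delta, "m")                            # of order 4e-18 m
print(delta / PROTON_RADIUS_M, "proton radii")  # well under one hundredth
```

So the detectors had to register a change in length hundreds of times smaller than a single proton.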

This observation completed a long progression. Neutron stars demonstrated that extreme gravitational compression could produce stable compact objects. Black holes extended this to complete gravitational collapse. Gravitational wave detection then revealed that such objects do not merely exist in isolation, but interact dynamically, generating measurable distortions of spacetime that propagate across the universe.

Part III - THE UNFINISHED PICTURE

Unification and the Quantum Challenge

In the 1820s–1830s, Michael Faraday transformed electricity and magnetism from separate curiosities into a unified experimental domain. His decisive breakthrough came in 1831 with the discovery of electromagnetic induction. He used an iron ring with two coils, and showed that a changing current in one coil produces a transient current in the other, even without direct electrical contact. He immediately extended this to a motion-based setup by moving a magnet through a coil or rotating a conductor in a magnetic field. This demonstrated that electrical currents arise from changes in magnetic conditions, not from static configurations. This led directly to the first electric generator (the rotating copper disk).

Faraday, through systematic experiments with magnets and iron filings, introduced the idea of “lines of force”, continuous structures in space representing the influence of electric and magnetic phenomena. He treated these not as mathematical abstractions but as physically real features of space, capable of tension, curvature, and propagation. His work on electrostatics (e.g. the Faraday cage) reinforced the idea that fields are structured and can be excluded or shaped by materials. Although Faraday used little mathematics, his experimental laws were later formalised by James Clerk Maxwell, who translated Faraday’s physical intuition into the equations of electromagnetism.

Faraday’s central legacy is therefore the replacement of “action at a distance” with local field interaction in space.

From the 1840s onward, Faraday occasionally turned to the question of whether gravity might be related to electromagnetic phenomena. His motivation was consistent with his field-based worldview, i.e. if electricity and magnetism can be unified, perhaps gravity is another manifestation of the same underlying structure. He carried out a series of careful but technically limited experiments designed to detect such a link.

These included attempts to observe whether gravitational motion could induce electrical currents, whether electrical charge altered the apparent weight of bodies, and whether strong magnetic fields influenced gravitational behaviour. He used sensitive balances, charged apparatus, and controlled magnetic environments. In all cases, he could not detect any measurable coupling between gravity and electricity or magnetism. Faraday simply claimed that if a connection existed, it lay beyond the sensitivity of available experiments.

Historically, these experiments are significant because they were among the first deliberate experimental probes of force unification. Faraday effectively asked a question that would later occupy Albert Einstein for the last 30 years of his life: could all fundamental interactions be reduced to a single underlying field?

I think it’s useful to now sit back and run through some of the key points that any theory of everything would have to explain.

Modern physics still does not possess a confirmed direct unification of these forces. It is possible that Faraday’s intuition that all forces arise from a common field-like structure was correct in spirit, but it required a framework beyond classical fields, involving quantum or geometric structures he could not access.

My understanding is that Einstein, from the 1920s onward, was looking for a single continuous field theory in which the two great long-range phenomena then known in fundamental physics (gravitation and electromagnetism) would arise together from one underlying structure of spacetime or geometry. But there was a mismatch. Gravity in Einstein’s theory is universal, and everything with energy and momentum responds to spacetime geometry. So gravity is everywhere.

Electromagnetism was not universal. It acts on electric charge, so neutral things are not affected electromagnetically in the same direct fashion as with gravity.

Electromagnetism was a field living in spacetime, but was not itself the geometry of spacetime in the same way gravity was.

So the problem was to find a single geometric structure rich enough that one part looks like gravity and another part looks like electromagnetism.

How can the same geometric world give rise to both gravity and electromagnetism?

General Relativity had already replaced Newton’s gravitational force with curved spacetime. Massive bodies did not “pull” in the old mechanical sense. Matter shaped spacetime, and bodies followed the structure of that geometry. Einstein wanted electromagnetism to be built into geometry, rather than being an additional, separate force acting in parallel. This was his unified field. We have to remember that Maxwell’s theory of electromagnetism had already unified electricity, magnetism, and light into one field framework, and Einstein had unified gravitation with spacetime geometry. So it was natural to think that nature was not built from disconnected pieces, but from one deeper order.

For Einstein, however, this was not simply a matter of combining two known forces. He wanted the fundamental laws of physics to contain no arbitrary elements. In General Relativity, once the basic principles were fixed, the structure of the theory followed with a kind of necessity, i.e. gravity was not added as a force, but emerged from the geometry itself. Einstein wanted the same inevitability for electromagnetism. It should just be another aspect of a single, underlying geometric structure of spacetime. He wanted all known interactions to be just different facets of one framework, with no need for separate postulates.

Einstein was not working within the emerging framework of quantum mechanics, which he regarded as incomplete and conceptually unsatisfactory. He did not attempt to incorporate probabilistic behaviour, measurement theory, or the later quantum description of particles and forces. Nor was he trying to unify the strong and weak nuclear forces, which were not yet formulated in their modern form. His unified field programme remained a classical field theory, concerned primarily with gravity and electromagnetism, and grounded in continuous structures rather than discrete quanta. I understand he was convinced that a deeper, more coherent description of nature should ultimately replace the quantum framework that was emerging.

The first really important attempt to go beyond General Relativity was made by Hermann Weyl in 1918.

Weyl saw that Riemannian geometry, the geometry underlying General Relativity, might not be general enough. In Einstein’s spacetime, lengths can be compared consistently from point to point. Weyl imagined a geometry in which not only direction but also scale could vary from place to place in a more active way. Instead of asking how to place electromagnetism into Einstein’s geometry, Weyl asked whether the geometry should be made richer so that electromagnetism appears naturally as one of its aspects.

This is historically very important because it introduced, in embryonic form, the idea of gauge invariance, which later became central to modern physics. But in Weyl’s original form, Einstein had a problem with the fact that in Weyl’s geometry, the length of an object could depend on its history through spacetime. Einstein argued that this would mean physical standards, such as the frequencies of atoms or the lengths of rods, would not remain stable. In effect, the theory seemed to imply that identical atoms would not behave identically after moving through different paths in a field.

Einstein rejected Weyl’s original theory as a description of nature, but he continued to explore the idea of enlarging geometry.

Theodor Kaluza, in 1921, proposed a different idea. Do not change geometry within four-dimensional spacetime, but instead, enlarge spacetime itself by one extra dimension. If the world is really five-dimensional rather than four-dimensional, then the geometry of that larger world may look, from our limited four-dimensional viewpoint, like both gravity and electromagnetism together. Einstein was interested because Kaluza’s approach suggested a very clean kind of unity. Gravity and electromagnetism might not be different structures at all, but just different visible parts of one higher-dimensional geometry.

Later Oskar Klein suggested that perhaps the extra dimension is real but extremely small and effectively hidden. That would explain why we do not directly see five-dimensional motion in daily life. Kaluza’s theory was suggestive, but it did not explain why the world had that structure.

Also, it remained classical, and did not include the quantum world that was rapidly becoming central to physics. Einstein believed that quantum mechanics was a powerful but incomplete formalism, and that a deeper, unified field description might restore a more fully intelligible picture of nature. He repeatedly searched for a primitive structure of spacetime from which known physics could emerge.

In General Relativity, the metric tensor captured all the geometric and causal structure of spacetime, and was used to define notions such as time, distance, volume, curvature, angle, and separation of the future and the past. But geometry also contained the way directions were transported from point to point, or how one defined straightness and parallel movement locally.

Here I’m beyond my capacity to understand the concepts and mathematics, but it’s worth trying to isolate some key elements. No guarantee.

From 1923 onwards Einstein studied “post-Riemannian” geometries, and the narrower “theory of connections”, which asks how a direction or vector changes when moved through spacetime. This meant that instead of defining geometry by distances, you define it by transport, curvature and parallelism rules. Instead of saying “distance defines geometry”, he asked whether “how a vector changes” defines geometry.

Affine approaches shift emphasis toward that second aspect. They explore whether the deeper reality might lie not primarily in the metric idea of length and angle, but in a more primitive notion of connection, direction, and transport. Affine geometry is often considered as the study of parallel lines.

Einstein became interested in whether one could start from a more general connection-like structure and recover both gravity and electromagnetism from it.

Teleparallelism (Fernparallelismus) was one of Einstein’s later major attempts, from the late 1920s onward (in the book I think these are referred to as “torsion” and “twisted spaces”). As I understand things, in ordinary curved spacetime, parallelism is local and subtle. Move around in curved space and the comparison of directions depends on the path. Teleparallelism tries to build a different global structure of parallelism into the manifold.

Einstein hoped that this richer structure might encode more than gravity alone. Perhaps gravity and electromagnetism could appear as different features of a geometry with both curvature-like and torsion-like aspects. So Einstein shifted from extra dimensions, to a different kind of geometric scaffolding in spacetime itself. But (as far as I can tell) Einstein never obtained a physically compelling, unique, and convincing theory from it. It did not produce a clear and broadly accepted derivation of the known world. As Einstein kept searching for unified field theories, the centre of physics moved towards quantum mechanics, quantum electrodynamics, and nuclear and particle physics.

The book turns to the work of Satyendra Nath Bose.

In the early 20th century, Albert Einstein was already deeply engaged with the emerging quantum theory of light and matter. In 1905, he had shown that light could behave as discrete packets of energy (now called photons), extending Max Planck’s earlier work on quantised radiation. The Planck postulate was that electromagnetic energy could be emitted only in quantised form, in other words, the energy could only be a multiple of an elementary unit:

E = hν

where h is the Planck constant, also known as Planck’s action quantum (introduced already in 1899), and ν is the frequency of the radiation.

Over the following two decades, Einstein continued to explore how large collections of particles behave when quantum effects are taken seriously. His focus was not just on individual particles, but on how ensembles of identical particles distribute themselves among possible energy states, especially at very low temperatures where classical physics begins to fail.

In 1924, Satyendra Nath Bose suggested treating particles as truly indistinguishable, rather than as distinguishable individuals (as classical physics did). In his view, it made no sense to track “which particle is which”. Only the number of particles in each energy state mattered. This seemingly small change completely altered the statistical rules. When Einstein read Bose’s paper, he extended Bose’s method from light (photons) to atoms.
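A small counting exercise shows how much this change matters. The sketch below is a toy model of my own: a handful of particles, a handful of states, and the usual assumption that every allowed arrangement is equally likely. It compares the chance of finding all the particles in the same state under the two ways of counting.

```python
from itertools import product
from math import comb


def classical_arrangements(n_particles, n_states):
    """Labelled particles: each particle independently picks a state."""
    return list(product(range(n_states), repeat=n_particles))


def bose_arrangements(n_particles, n_states):
    """Indistinguishable particles: only the occupation numbers matter."""
    labelled = classical_arrangements(n_particles, n_states)
    return sorted({tuple(a.count(k) for k in range(n_states)) for a in labelled})


def fraction_all_together(n_particles, n_states, distinguishable):
    """Fraction of arrangements in which every particle sits in the same state."""
    if distinguishable:
        arrangements = classical_arrangements(n_particles, n_states)
        together = [a for a in arrangements if len(set(a)) == 1]
    else:
        arrangements = bose_arrangements(n_particles, n_states)
        together = [occ for occ in arrangements if max(occ) == n_particles]
    return len(together) / len(arrangements)


# Two particles, two states.
print(fraction_all_together(2, 2, distinguishable=True))   # 0.5  (2 of 4 arrangements)
print(fraction_all_together(2, 2, distinguishable=False))  # 2 of 3 arrangements

# The number of Bose arrangements matches the standard formula C(n + k - 1, n).
print(len(bose_arrangements(2, 2)) == comb(2 + 2 - 1, 2))  # True
```

With only two particles the difference is modest, but it already shows that indistinguishable particles are more likely to be found sharing a state.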

What emerged from this combined insight was something entirely new. Bose’s approach implied that certain particles, now called bosons, do not resist sharing the same state. Remember that there are two fundamental classes of subatomic particle, bosons and fermions. It was Paul Dirac who coined the term boson to classify the fundamental particles that obey Bose–Einstein statistics.

The basic idea was that as the temperature is lowered (very, very close to absolute zero, e.g. nano-kelvin), the usual spread of particles across many different energies begins to collapse. Instead of each particle behaving independently, more and more of them accumulate in the lowest possible energy state. Einstein realised that, under the right conditions, this would not be a small effect but that a large fraction of all the particles in a system would suddenly occupy the same state.
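How sudden is “suddenly”? For the simplest textbook case (an ideal gas of bosons in a box) the fraction of particles in the lowest state is 1 − (T/Tc)^(3/2) below a critical temperature Tc, and zero above it. This formula is the standard idealised result and is my addition, not the book’s. The critical temperature of 100 nK used below is a hypothetical value chosen only for illustration.

```python
def condensate_fraction(temperature, critical_temperature):
    """Fraction of particles in the lowest state for an ideal Bose gas in a box."""
    if temperature >= critical_temperature:
        return 0.0
    return 1.0 - (temperature / critical_temperature) ** 1.5


T_CRITICAL_NK = 100.0  # hypothetical critical temperature, in nanokelvin

for t in (150.0, 100.0, 75.0, 50.0, 10.0, 0.0):
    print(t, "nK ->", round(condensate_fraction(t, T_CRITICAL_NK), 3))
```

Above the critical temperature nothing special happens, then within a narrow range of cooling most of the particles pile into the same state.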

In simple terms, what Bose proposed, and Einstein developed, was that as a suitable collection of particles was cooled down, the particles would stop behaving like a crowd of separate individuals and begin to act as a single, unified entity. Rather than many tiny, independent atoms moving randomly, you get a system where all those atoms share the same quantum “identity”, moving together as one. This was a purely theoretical prediction at the time, but it described a completely new state of matter (now called a Bose–Einstein condensate) where the boundary between individual particles effectively disappears and the whole system behaves like one coherent quantum object.

It is my understanding that cold-atom interferometers already measure acceleration and rotation, the exact quantities needed for GPS-independent navigation (i.e. no jamming, almost no drift). Prototypes exist, so a self-contained navigation unit that does not need GPS might be possible in 10-20 years.

Wave-particle duality in physics emerged as a way to reconcile experimental facts that resisted classical categorisation. In the 19th century, light was firmly established as a wave phenomenon through the work of Thomas Young (interference, 1801) and James Clerk Maxwell (electromagnetic waves, 1860s). However, at the turn of the 20th century, phenomena such as black-body radiation and the photoelectric effect could not be explained within a purely wave framework. In 1900, Max Planck introduced the idea that energy is exchanged in discrete quanta. In 1905, Albert Einstein extended this by proposing that light itself could behave as if it were composed of localised packets of energy (later called photons). This was a recognition that light exhibited both wave-like and particle-like properties depending on the experimental context.

The decisive conceptual shift came with Louis de Broglie in 1924. De Broglie proposed that the duality observed in light was not unique. If radiation (traditionally a wave) could exhibit particle-like properties, then matter (traditionally particles) might exhibit wave-like properties. He introduced the hypothesis that any particle with momentum p is associated with a wavelength λ=h/p, where h is Planck’s constant. This implied that electrons, atoms, and in principle all matter possess an intrinsic wave character. Importantly, de Broglie initially derived this from a symmetry argument, an extension of the emerging quantum principles suggesting that nature does not maintain a strict division between waves and particles.
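To see what size of wavelength λ=h/p gives in practice, here is a minimal sketch for a slow electron. It assumes the electron has been accelerated through 54 volts (a value picked for illustration) and uses the non-relativistic momentum p = √(2·m·e·V), which is a reasonable approximation at such low energies.

```python
import math

H = 6.62607015e-34          # Planck constant, J s
M_ELECTRON = 9.1093837e-31  # electron mass, kg
E_CHARGE = 1.602176634e-19  # elementary charge, C


def de_broglie_wavelength(voltage):
    """Wavelength (metres) of an electron accelerated from rest through `voltage` volts."""
    kinetic_energy = E_CHARGE * voltage                    # joules
    momentum = math.sqrt(2.0 * M_ELECTRON * kinetic_energy)  # non-relativistic
    return H / momentum


wavelength = de_broglie_wavelength(54.0)
print(wavelength * 1e9, "nm")   # roughly 0.17 nm
```

A wavelength of about a tenth of a nanometre is comparable to the spacing between atoms in a crystal, which is why a crystal can act as a diffraction grating for electrons.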

It’s a shame that the book does not mention that this hypothesis was rapidly confirmed experimentally. In 1927, Clinton Davisson and Lester Germer observed diffraction patterns when electrons were scattered off a nickel crystal, precisely as waves would behave.

Independently, George Paget Thomson demonstrated similar effects using electron beams passing through thin metal films. These results provided direct, quantitative confirmation of de Broglie’s relation and established matter waves as a physical reality, not a mathematical abstraction. The implication was that particles do not simply travel along trajectories in space, their behaviour must be described by wave-like distributions that encode probabilities of detection.

The broader theoretical framework was developed almost immediately afterward. In 1926, Erwin Schrödinger formulated wave mechanics, in which the evolution of a system is described by a wavefunction obeying the Schrödinger equation. This wavefunction incorporates de Broglie’s wavelength concept at a fundamental level. However, the interpretation of this “wave” was not classical. Through the work of Max Born, the wavefunction came to be understood not as a physical oscillation in space, but rather as a probability amplitude. Duality therefore does not mean that a particle is sometimes a wave and sometimes a particle in a classical sense. Rather, quantum objects are described by a single formalism that produces wave-like interference effects and particle-like detection events.

The notion of duality found its first coherent conceptual framework in the work of Niels Bohr, who, in the mid-1920s, introduced the principle of complementarity. Bohr did not attempt to resolve the wave–particle contradiction. Instead, in his view, experimental arrangements determine which aspect, either wave-like or particle-like, can be observed, but never both simultaneously in full detail. This was grounded in the emerging formalism of quantum mechanics and reinforced by the growing body of experiments. The electron, for example, could produce interference patterns (wave behaviour) in one setup, yet appear as a localised impact (particle behaviour) in another. So Bohr established duality as a principle, i.e. physics could no longer describe “what the electron is”, but only “what can be observed under specific conditions”. This interpretation became a cornerstone of what is now called the Copenhagen interpretation.

At almost the same time, the abstract mathematical structure of quantum theory was being rapidly developed, culminating in the work of Paul Dirac. In 1928, he formulated a relativistic wave equation for the electron, combining quantum mechanics with special relativity in a way that neither Erwin Schrödinger nor Werner Heisenberg had achieved. The Dirac equation naturally incorporated spin as an intrinsic property of the electron and predicted the correct magnetic moment.

In solving his equation, Dirac encountered solutions corresponding to negative energy states. Rather than discarding them as unphysical, he interpreted them as representing a new type of particle. One that was identical in mass to the electron but with opposite charge. This was the first theoretical prediction of antimatter, later confirmed experimentally with the discovery of the positron in 1932 (by Carl David Anderson who observed the positron in cosmic ray experiments). Dirac’s work showed that duality was not merely about wave-like behaviour in space, but about a deeper structure in which particles are excitations of relativistic quantum fields, with symmetry between positive and negative energy solutions.

Again I found it a shame that experimental grounding of these abstract ideas merits only 6 lines. One of the most decisive early demonstrations came from the Otto Stern–Walther Gerlach experiment of 1922. They demonstrated that a beam of silver atoms passing through a non-uniform magnetic field split into discrete components rather than forming a continuous distribution. This result could not be explained by classical physics, which would predict a smooth spread of orientations. Instead, it revealed that angular momentum (and later understood, spin) is quantised. While not a direct test of wave behaviour like electron diffraction, the Stern–Gerlach experiment reinforced the same underlying principle that microscopic entities do not occupy a continuum of classical states but exist in discrete, quantised configurations that only manifest upon measurement.

In 1905, Albert Einstein derived his famous relation establishing that mass is a form of energy. However, in the relativistic framework used by Dirac, the full energy–momentum relation is more complete, and admits both positive and negative energy solutions (as a physical necessity of relativistic symmetry). In this formulation particles can transform into pure radiation and back, governed by relativistic quantum laws.
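The relations referred to here are, in their standard form, E = mc² for a body at rest and E² = (pc)² + (mc²)² in general. These are my reconstruction from the surrounding description. Taking the square root of the second one gives two answers, one positive and one negative, which is where Dirac’s negative energy states come from. A minimal sketch, working in MeV so that the factors of c drop out:

```python
import math

ELECTRON_REST_ENERGY_MEV = 0.511  # electron rest energy mc^2, in MeV (rounded)


def energy_solutions(momentum_mev, rest_energy_mev):
    """Both roots of E^2 = (pc)^2 + (mc^2)^2, with pc and mc^2 given in MeV."""
    energy = math.sqrt(momentum_mev**2 + rest_energy_mev**2)
    return energy, -energy


# At rest (p = 0) the relation reduces to E = mc^2, plus its negative twin.
print(energy_solutions(0.0, ELECTRON_REST_ENERGY_MEV))

# With pc = 1 MeV the energy is larger than the rest energy alone.
print(energy_solutions(1.0, ELECTRON_REST_ENERGY_MEV))
```

Classical physics would simply throw the negative root away. Dirac’s insight was to take it seriously.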

When a particle meets its antiparticle, they can annihilate, converting their mass entirely into energy, typically gamma-ray photons.

Conversely, sufficiently energetic photons can produce particle–antiparticle pairs. Pair production demonstrates that mass is not a conserved substance but a manifestation of energy under specific conditions.
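To put a number on “sufficiently energetic”, here is a minimal sketch of the arithmetic for an electron–positron pair. It simply applies E = mc² to the electron mass; the constant and function names are my own, and it ignores the recoil of the nearby nucleus that single-photon pair production needs in practice.

```python
# Rest energy of the electron and the minimum photon energy for pair production.
# Constant values are standard reference values; names are illustrative only.
ELECTRON_MASS_KG = 9.1093837e-31
SPEED_OF_LIGHT = 299_792_458.0        # metres per second
JOULES_PER_MEV = 1.602176634e-13


def rest_energy_mev(mass_kg):
    """Return the rest energy m*c^2 of a particle, converted to MeV."""
    return mass_kg * SPEED_OF_LIGHT**2 / JOULES_PER_MEV


# Annihilation at rest releases two rest energies, shared between the photons.
electron_rest_energy = rest_energy_mev(ELECTRON_MASS_KG)

# Creating an electron AND a positron needs at least two rest energies.
pair_threshold = 2 * electron_rest_energy

print(f"electron rest energy: {electron_rest_energy:.3f} MeV")
print(f"pair production threshold: {pair_threshold:.3f} MeV")
```

The result is about 0.511 MeV per particle, so a photon needs a little over 1 MeV, which puts it firmly in the gamma-ray range.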

The transition from de Broglie’s matter waves and Erwin Schrödinger’s wave equation to a fully consistent physical interpretation required a decisive conceptual step, which was provided by Max Born in 1926.

Born proposed that the wavefunction ψ, central to Schrödinger’s formulation, did not describe a physical wave in space (like a water wave or electromagnetic field), but rather a probability amplitude (see the useful video below). The measurable quantity is |ψ|², the probability density of finding a particle at a given location upon measurement. This interpretation was first applied to scattering problems and was immediately successful in matching experimental results.

Born’s insight resolved a critical ambiguity, namely that de Broglie’s “matter waves” could not be literal oscillations of matter spread out in space, because particles are always detected as localised events. By reinterpreting the wave as encoding probabilities, Born preserved the wave-like mathematical structure while aligning it with the discrete, particle-like outcomes observed experimentally. This marked a fundamental shift from deterministic trajectories to intrinsically probabilistic predictions.

Closely connected to this development was the independent formulation of quantum mechanics by Werner Heisenberg in 1925. Heisenberg’s matrix mechanics avoided having to visualise waves altogether and instead focused on observable quantities, such as transition frequencies and intensities. A transition frequency is simply the frequency of radiation emitted or absorbed when an atom moves between two energy states. Primarily these were electronic transitions (visible / UV / optical spectra), e.g. the hydrogen spectral lines (Balmer series, etc.). Intensity is the probability (or rate) that a specific quantum transition occurs, observed experimentally as the brightness of the corresponding spectral line.
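As a concrete example of a transition frequency, the Balmer lines of hydrogen can be computed from the Rydberg formula. This is a small sketch of my own, not something from the book; the function names are made up, and the wavelengths are vacuum values.

```python
# Balmer series: transitions ending on the n = 2 level of hydrogen.
RYDBERG_HYDROGEN = 1.0967758e7     # per metre, Rydberg constant for hydrogen
SPEED_OF_LIGHT = 299_792_458.0     # metres per second


def balmer_wavelength_nm(n_upper):
    """Vacuum wavelength (nm) of the line emitted in the jump n_upper -> 2."""
    if n_upper <= 2:
        raise ValueError("Balmer lines need an upper level n > 2")
    inverse_wavelength = RYDBERG_HYDROGEN * (1 / 2**2 - 1 / n_upper**2)
    return 1e9 / inverse_wavelength


def transition_frequency_hz(n_upper):
    """Frequency (Hz) of the same transition, using f = c / wavelength."""
    return SPEED_OF_LIGHT / (balmer_wavelength_nm(n_upper) * 1e-9)


for n in (3, 4, 5, 6):
    print(n, round(balmer_wavelength_nm(n), 1), "nm",
          f"{transition_frequency_hz(n):.3e} Hz")
```

The first line (n = 3 to n = 2) comes out at roughly 656 nm, the familiar red H-alpha line. These frequencies, and the brightness of each line, are the kind of observable data Heisenberg built his theory around.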

The transition frequencies and intensities were represented by non-commuting mathematical objects (matrices). From this formalism, and in parallel with the probabilistic interpretation introduced by Born (with whom Heisenberg was closely associated in Göttingen), emerged the uncertainty principle, formulated in 1927. The principle states that certain pairs of physical quantities, most famously position (x) and momentum (p), cannot be simultaneously known with arbitrary precision. Quantitatively, their uncertainties satisfy a lower bound set by the reduced Planck constant (ℏ).

ΔxΔp≥ℏ/2

This relation is not a statement about measurement imperfections or technological limitations. It arises directly from the mathematical structure of quantum mechanics, specifically the non-commutativity of the operators corresponding to position and momentum.
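For revision purposes, the link between non-commutativity and the bound can be written in two lines. This is the standard textbook route (the general inequality is usually credited to Robertson), not a derivation taken from the book:

```latex
\begin{align}
[\hat{x}, \hat{p}] &= \hat{x}\hat{p} - \hat{p}\hat{x} = i\hbar \\
\Delta A \, \Delta B &\ge \tfrac{1}{2}\left|\langle [\hat{A}, \hat{B}] \rangle\right|
\quad\Longrightarrow\quad
\Delta x \, \Delta p \ge \tfrac{1}{2}\left|i\hbar\right| = \frac{\hbar}{2}
\end{align}
```

If the two operators commuted, the right-hand side would be zero and there would be no lower bound at all.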

If a quantum state is constructed to be highly localised in space (small Δx), it must necessarily involve a wide range of momentum components (large Δp), because the wavefunction is built from superpositions of waves with different wavelengths (via Fourier analysis). Conversely, a well-defined momentum corresponds to a long, spread-out wave, implying large positional uncertainty. Thus, the uncertainty principle is a structural consequence of representing physical systems as wave-like probability amplitudes.
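The Fourier argument can be checked numerically. The sketch below is my own illustration, assuming a Gaussian wave packet and units in which ℏ = 1. It builds the packet on a grid, takes a brute-force Fourier transform, and compares the spreads in position and momentum.

```python
import cmath
import math

HBAR = 1.0  # natural units, an assumption made purely for illustration


def gaussian_packet(sigma, xs):
    """Normalised Gaussian wavefunction centred on x = 0 with position spread sigma."""
    norm = (2.0 * math.pi * sigma**2) ** -0.25
    return [norm * math.exp(-x**2 / (4.0 * sigma**2)) for x in xs]


def spread(values, density, step):
    """Standard deviation of `values` weighted by a (possibly unnormalised) density."""
    total = sum(density) * step
    mean = sum(v * d for v, d in zip(values, density)) * step / total
    var = sum((v - mean) ** 2 * d for v, d in zip(values, density)) * step / total
    return math.sqrt(var)


def uncertainty_product(sigma, n=300):
    """Return (delta_x, delta_p) for a Gaussian packet of width sigma."""
    # Position grid, wide enough to contain essentially the whole packet.
    x_max = 8.0 * sigma
    dx = 2.0 * x_max / n
    xs = [-x_max + i * dx for i in range(n + 1)]
    psi = gaussian_packet(sigma, xs)

    # Wavenumber grid, wide enough to contain the packet's Fourier transform.
    k_max = 4.0 / sigma
    dk = 2.0 * k_max / n
    ks = [-k_max + j * dk for j in range(n + 1)]

    # Direct numerical Fourier integral: phi(k) = sum of psi(x) * exp(-i k x) * dx.
    phi = [sum(p * cmath.exp(-1j * k * x) for p, x in zip(psi, xs)) * dx for k in ks]

    delta_x = spread(xs, [p * p for p in psi], dx)
    delta_p = HBAR * spread(ks, [abs(a) ** 2 for a in phi], dk)  # p = hbar * k
    return delta_x, delta_p


for sigma in (0.5, 1.0, 2.0):
    delta_x, delta_p = uncertainty_product(sigma)
    print(f"sigma={sigma}: dx={delta_x:.3f} dp={delta_p:.3f} product={delta_x * delta_p:.3f}")
```

Squeezing the packet in position widens it in momentum, and the product stays at ℏ/2. A Gaussian is the special case that sits exactly on the bound; other shapes give a larger product.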

The deeper meaning of Heisenberg’s uncertainty principle lies in its rejection of classical determinism. In classical mechanics, a particle can, in principle, have a precisely defined position and momentum at all times, allowing exact prediction of its future trajectory. In quantum mechanics, this is fundamentally impossible. The uncertainty principle therefore limits not only measurement but also the very existence of simultaneously well-defined classical properties.

By the mid-1920s, quantum theory had split, at least intellectually, into two contrasting camps. On one side stood Niels Bohr, Werner Heisenberg, and Max Born, who accepted that the new theory imposed an intrinsic limit on what could be known, i.e. physical systems were to be described in terms of probabilities, with measurement playing a defining role in what becomes real. But figures such as Albert Einstein and Erwin Schrödinger resisted this conclusion, and sought a deeper, underlying description in which the apparent randomness would be replaced by hidden structure and determinism. Both sides accepted the same equations and data, so their disagreement was about what those results meant.

The fundamental question was, and maybe still is, whether quantum mechanics is a complete description of reality, inherently probabilistic at its core, or an effective theory masking a deeper deterministic layer.

This tension was sharpened in 1935 by Erwin Schrödinger, who introduced a concrete thought experiment to expose the consequences of taking the quantum formalism at face value. He imagined a sealed box containing a cat, a small amount of radioactive material, a Geiger counter, and a mechanism that would release poison if a decay event were detected. The setup is arranged so that within a given time interval there is a 50% probability that one atom decays and a 50% probability that it does not. If the atom decays, the detector triggers the release of poison and the cat dies; if it does not, the cat remains alive.

According to quantum mechanics, before observation the radioactive atom is described by a superposition of “decayed” and “not decayed” states. Because the detector and poison mechanism are physically coupled to the atom, the entire system evolves as a single quantum state. The combined system must therefore be described as a superposition of two correlated outcomes, one in which the atom has decayed (the cat is dead), and another in which the atom has not decayed (the cat is alive). Only when the box is opened and a measurement is made does the system appear in one definite state.

Schrödinger’s purpose was to demonstrate that if the wavefunction is taken as a complete description of reality, then superposition must extend from microscopic systems to macroscopic objects, leading to conclusions that conflict with ordinary experience. The experiment therefore forces a choice: either quantum mechanics is incomplete, or the process by which definite outcomes emerge from superpositions requires further explanation beyond the standard formalism.
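In symbols, an idealised version of the state inside the box can be written as below. This is my own shorthand, assuming equal amplitudes (from the 50% decay probability) and an arbitrary choice of relative phase:

```latex
|\Psi\rangle = \frac{1}{\sqrt{2}}
\Big( |\text{not decayed}\rangle\,|\text{alive}\rangle
    + |\text{decayed}\rangle\,|\text{dead}\rangle \Big)
```

Squaring each amplitude gives a probability of 1/2 for each outcome. The point is that the state cannot be split into “a state of the atom” times “a state of the cat”.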

This argument was developed in parallel with the Einstein–Podolsky–Rosen (EPR) paper of the same year, which also questioned the completeness of quantum mechanics. Together, these critiques forced the issue into the open: the problem was no longer how to calculate quantum phenomena, but how to understand what the theory claims about reality itself.

The book stresses this point with the question “does a tree fall in a forest, if no one hears it”. The Copenhagen School would argue that the tree exists in a superposition of all possible states (fallen, upright, mature, burnt, rotten, …) until it is observed, whereupon it takes on one definite state. On this reading, it is the act of observing the tree that determines its state.

The EPR paper was not experimental but logical. Einstein, Podolsky and Rosen considered a pair of particles that have interacted and then separated, such that their physical properties are strongly correlated. For example, if the total momentum of the pair is known to be zero, then measuring the momentum of one particle immediately determines the momentum of the other, no matter how far apart they are. Similarly, by choosing instead to measure the position of one particle, one can infer the position of the other. EPR introduced a criterion of physical reality: “If one can predict with certainty the value of a physical quantity without disturbing the system, then that quantity corresponds to an element of reality”.

Using this, they argued that the distant particle must simultaneously possess definite values of both position and momentum, since either can be predicted depending on what measurement is performed on the first particle. However, quantum mechanics does not allow both quantities to be simultaneously well-defined. Therefore, they concluded, quantum mechanics is not a complete description of reality.

Niels Bohr did not deny the correlations identified in EPR. Instead, he rejected the assumption that physical properties such as position and momentum exist as well-defined attributes independent of the experimental arrangement. In Bohr’s framework, a “physical quantity” is not an intrinsic property carried by a system in isolation, but one that is defined only within a specific measurement context. To measure position, for example, one must use an apparatus that fixes spatial localisation, which necessarily disturbs or leaves undefined the momentum. Conversely, a momentum measurement requires an arrangement incompatible with precise localisation.

These are not practical limitations but mutually exclusive experimental conditions embedded in the structure of quantum theory. Therefore, when EPR argued that one can assign simultaneous reality to both position and momentum of the distant particle (because either can be predicted), Bohr countered that each prediction refers to a different experimental context, and these contexts cannot be combined into a single, coherent description of reality. The act of measurement is thus inseparable from the definition of the quantity because it is the measurement arrangement itself that gives meaning to what is being measured.

The EPR argument remained a conceptual challenge until 1964, when John Stewart Bell transformed it into a precise, testable statement. Bell considered theories in which measurement outcomes are determined by underlying variables (often called “hidden variables”) and in which no influence can propagate faster than light (locality). Under these assumptions, he derived constraints, now known as Bell inequalities, on the statistical correlations that can be observed between measurements performed on two spatially separated but entangled particles. These inequalities do not depend on the detailed mechanism of the hidden variables, and they follow purely from the joint requirements of locality and realism.

Quantum mechanics predicts correlations that violate these inequalities. In particular, for suitably chosen measurement settings (for example, different orientations of spin measurements on entangled particles), the theory yields stronger correlations than any local hidden-variable model can produce. This arises from the structure of entangled states, in which the joint system is described by a single wavefunction that cannot be factorised into independent states of each particle. The correlations are therefore not imposed at the moment of measurement, nor carried by pre-existing local properties, but are intrinsic to the quantum description of the system as a whole.
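The best-known Bell inequality is the CHSH form, which can be checked with a few lines of arithmetic. The sketch below is my own and assumes the standard singlet-state prediction E(a, b) = −cos(a − b) for spin measurements along directions at angles a and b. It compares that prediction with a brute-force search over every deterministic local assignment of ±1 outcomes.

```python
import itertools
import math


def singlet_correlation(a, b):
    """Quantum prediction for the correlation of spin results on a singlet pair."""
    return -math.cos(a - b)


def chsh(correlation, a, a_alt, b, b_alt):
    """CHSH combination S = E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    return (correlation(a, b) - correlation(a, b_alt)
            + correlation(a_alt, b) + correlation(a_alt, b_alt))


def max_local_chsh():
    """Largest |S| when each outcome is a fixed +1 or -1 for each local setting."""
    best = 0
    for out_a, out_a_alt, out_b, out_b_alt in itertools.product((-1, 1), repeat=4):
        s = out_a * out_b - out_a * out_b_alt + out_a_alt * out_b + out_a_alt * out_b_alt
        best = max(best, abs(s))
    return best


# Measurement angles (radians) that maximise the quantum violation.
a, a_alt = 0.0, math.pi / 2
b, b_alt = math.pi / 4, 3 * math.pi / 4

S = chsh(singlet_correlation, a, a_alt, b, b_alt)
print("local hidden-variable bound:", max_local_chsh())
print("quantum prediction |S|:", abs(S))
```

Every local assignment gives |S| of at most 2, and averaging over hidden variables cannot beat that. The quantum prediction at these angles is 2√2, about 2.83, which is the gap the experiments set out to measure.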

In the early 1980s, Alain Aspect and collaborators performed experiments using pairs of entangled photons emitted from atomic cascades. They measured polarisation correlations at different detector settings while ensuring that the choice of measurement on one side could not influence the other within the time allowed by the speed of light. The observed correlations violated Bell inequalities in agreement with quantum predictions. Later experiments have refined these tests, closing successive “loopholes” (such as detector efficiency and communication between measurement devices), but the essential result has remained unchanged. Bell’s work thus converted the EPR debate from a philosophical dispute into an empirical question, with experiments strongly favouring the non-classical structure of quantum theory.

War, Peace and ...

This is a chapter that focuses on Einstein leaving Germany, and moving to the US.

Frankly, by now I was having problems following the science in the last chapter, and did not have much enthusiasm for a chapter on Einstein’s life in the US.

Einstein's Prophetic Legacy

I don’t intend to look into this final chapter. My reason is simple: if I’m going to look into his legacy I will buy a book on the subject, and not read a 28-page closing chapter. Will I buy that book? Probably not…